Jun 09, 2025

Protocol for Developing Integration Frameworks for Automated Evidence Synthesis in Implementation Science

- Nabil Zary¹

- ¹Mohammed Bin Rashid University of Medicine and Health Sciences

Protocol Citation: Nabil Zary 2025. Protocol for Developing Integration Frameworks for Automated Evidence Synthesis in Implementation Science. protocols.io https://dx.doi.org/10.17504/protocols.io.eq2lyqk1pvx9/v1

License: This is an open access protocol distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited

Protocol status: Working

We use this protocol and it's working

Created: June 08, 2025

Last Modified: June 09, 2025

Protocol Integer ID: 219771

Keywords: Implementation science, evidence synthesis, artificial intelligence, machine learning, systematic reviews, framework development, technology integration, automated screening, large language models, health informatics, knowledge translation, EPIS framework, human-AI collaboration, evidence-based practice, quality assurance, stakeholder engagement, equity safeguards, organizational implementation, healthcare technology, research methodology, evidence synthesis technologies within implementation science practice, automated evidence synthesis in implementation science, implementation science across diverse organizational context, implementation science, implementation science practice, careful consideration of implementation science, automated evidence synthesis technology, specialized needs of implementation science, implementation science perspective evaluation, preserving implementation science, traditional evidence synthesis method, automated evidence synthesis, systematic methodology, combining systematic li

Disclaimer

This protocol describes a methodology for conceptual framework development rather than an empirical research study design. The resulting frameworks require subsequent validation through pilot implementations and effectiveness studies to demonstrate practical utility and real-world applicability. Users should recognize that artificial intelligence technologies evolve rapidly, necessitating periodic framework updates as technological capabilities advance beyond current performance parameters.

The protocol addresses implementation science applications specifically and may require substantial adaptation for other research synthesis contexts. Organizational, cultural, and resource factors significantly influence framework applicability across different institutional and geographical settings. Organizations considering protocol implementation should carefully assess their technological infrastructure, workforce capabilities, and stakeholder readiness before initiating framework development processes.

This methodology assumes access to comprehensive literature databases, qualified personnel with both implementation science and technology expertise, and sufficient time allocation for thorough framework development and validation activities. Resource-constrained environments may require protocol modifications or alternative approaches to achieve comparable outcomes.

The protocol acknowledges ongoing ethical considerations regarding artificial intelligence applications in research contexts, including bias mitigation, transparency requirements, and equitable access to technological capabilities. Framework developers should incorporate relevant institutional review board guidance and ethical standards appropriate to their organizational context and intended framework applications.

Users should note that successful framework implementation depends on continued stakeholder engagement, organizational commitment to quality assurance processes, and adaptive management approaches that respond to emerging challenges and technological developments in automated evidence synthesis applications.

Abstract

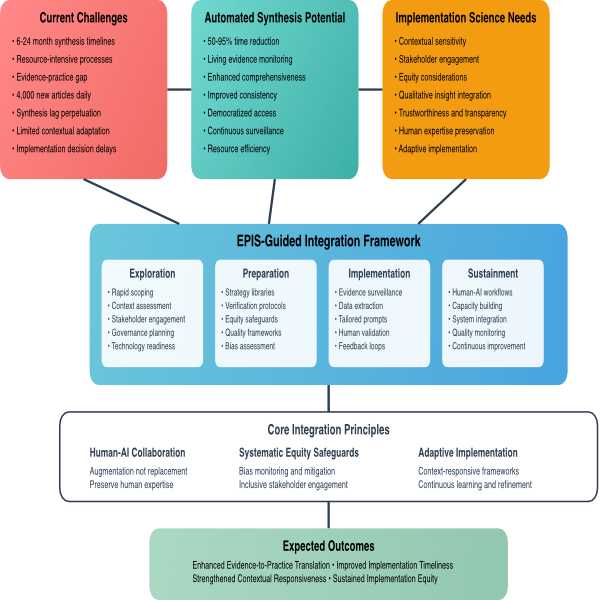

This protocol provides a systematic methodology for developing comprehensive frameworks that guide the responsible integration of automated evidence synthesis technologies within implementation science practice. The approach addresses the critical challenge of bridging the evidence-to-practice gap through technological innovation while preserving implementation science's core values of contextual sensitivity, stakeholder engagement, and equity.

The methodology employs a multi-phase approach combining systematic literature analysis, implementation science perspective evaluation, theoretical framework application, integration strategy development, and validation processes. The protocol is designed to produce actionable frameworks that balance automation's efficiency capabilities with the nuanced requirements of implementation science across diverse organizational contexts.

Traditional evidence synthesis methods take six to twenty-four months to complete, while the biomedical literature grows by approximately four thousand articles daily. The result is an unsustainable mismatch between the pace of evidence generation and the needs of implementation decision-making. Recent advances in artificial intelligence and large language models demonstrate the capability to reduce synthesis time by fifty to ninety-five percent and to enable continuous evidence monitoring, yet integrating them requires careful consideration of implementation science's unique epistemological and practical requirements.

This protocol addresses the gap between generic technology adoption approaches and the specialized needs of implementation science by providing structured guidance for framework development that maintains fidelity to field values while leveraging demonstrated technological capabilities. The resulting frameworks support organizational decision-making regarding appropriate automation levels, human oversight requirements, quality assurance protocols, and equity safeguarding mechanisms necessary for responsible technology adoption.

Image Attribution

Nabil Zary (developed using Figma - academic license)

Guidelines

Successful protocol execution requires systematic adherence to established quality control measures throughout all development phases. Research teams should implement independent dual review for literature screening, data extraction, and framework development activities to ensure methodological rigor and minimize the introduction of bias. Set a minimum inter-rater agreement threshold of eighty percent, and document the procedure for resolving discrepant assessments between reviewers.
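As an illustration of the agreement check described above, the following minimal sketch computes raw percent agreement between two reviewers and, as an optional chance-corrected companion, Cohen's kappa. The protocol itself specifies only the eighty percent agreement threshold; the use of kappa, the function names, and the `AGREEMENT_THRESHOLD` constant are assumptions made for this example.

```python
from collections import Counter

AGREEMENT_THRESHOLD = 0.80  # eighty percent, as stated in the guidelines


def percent_agreement(rater_a, rater_b):
    """Share of items on which both reviewers made the same decision."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement (an addition, not required by the protocol)."""
    n = len(rater_a)
    p_observed = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Expected agreement if both reviewers decided independently.
    p_expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if p_expected == 1.0:
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


def disagreements(rater_a, rater_b):
    """Indices of items that need conflict resolution."""
    return [i for i, (a, b) in enumerate(zip(rater_a, rater_b)) if a != b]
```

The indices returned by `disagreements` can feed the documented conflict resolution procedure, for example by routing those records to a third reviewer.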

Maintain comprehensive documentation standards throughout the protocol execution period to support transparency, replicability, and audit trail requirements. Document all methodological decisions, including search strategy modifications, inclusion criteria adjustments, and framework development rationales, with sufficient detail to enable independent verification and protocol adaptation by other research teams.

Establish regular validation checkpoints at critical development milestones to ensure alignment with implementation science principles and practical applicability requirements. Include internal quality assessments conducted by the research team and external expert validation involving implementation science specialists and potential end-users who can evaluate framework comprehensiveness and feasibility.

Implement systematic bias minimization procedures throughout all protocol phases, including explicit consideration of diverse perspectives and potential conflicts of interest that might influence literature selection, evidence interpretation, or framework development decisions. Research teams should acknowledge and address their disciplinary backgrounds and institutional contexts that may shape framework development approaches.

Materials

Literature Access Resources

Comprehensive database subscriptions, including PubMed, Cochrane Library, Embase, and specialized implementation science journals, with access to publications from 2020 through the present. Institutional library privileges or commercial database access for peer-reviewed literature retrieval and full-text document acquisition.

Analytical and Collaboration Tools

Qualitative data analysis software supporting thematic analysis and framework development processes. Reference management systems for literature organization and citation tracking throughout the protocol execution period. Collaboration platforms enabling multi-reviewer coordination and stakeholder consultation processes.

Technical Infrastructure

Visualization software for framework development and presentation materials creation. Survey platforms for stakeholder feedback collection and expert review coordination. Document management systems supporting version control and collaborative editing throughout framework development phases.

Human Resources

Principal investigator with advanced implementation science expertise and familiarity with systematic review methodologies. Research team members with literature review capabilities and analytical skills for evidence synthesis processes. Access to implementation science experts and potential end-users for validation and feedback processes.

Troubleshooting

Safety warnings

This protocol produces conceptual frameworks requiring subsequent empirical validation through pilot implementations and comparative effectiveness studies to demonstrate real-world utility and identify necessary refinements. Organizations should not assume framework effectiveness without systematic testing in actual implementation contexts with appropriate outcome measurement and stakeholder feedback collection.

Artificial intelligence technologies evolve rapidly, with capabilities advancing significantly beyond current performance parameters assessed in existing literature. Framework developers must recognize that technology assessments conducted during protocol execution may quickly become outdated, necessitating regular updates and modifications to maintain relevance and accuracy in rapidly changing technological landscapes.

The protocol focuses on implementation science applications and may require substantial adaptation for other research synthesis contexts or disciplinary areas. Direct transferability to clinical research, public health, or educational settings cannot be assumed without carefully considering domain-specific requirements and stakeholder needs that differ from implementation science contexts.

Resource requirements for comprehensive protocol execution may exceed the capabilities of smaller organizations or individual researchers. Inadequate access to literature databases, qualified personnel, or stakeholder networks significantly compromises framework development quality and practical applicability. Organizations should conduct realistic resource assessments before protocol initiation rather than discovering limitations during execution phases.

Framework development outcomes depend heavily on organizational context, stakeholder engagement quality, and institutional readiness for technology adoption. Results achieved in well-resourced academic settings may not translate effectively to community-based organizations, resource-constrained healthcare systems, or international contexts with different technological infrastructure and regulatory environments.

Ethics statement

Framework development activities involve analysis of published literature and stakeholder consultation processes that typically do not require formal institutional review board approval. However, organizations should consult with their institutional ethics review processes to determine local requirements and ensure compliance with applicable research ethics standards.

Stakeholder engagement activities during validation phases must respect participant autonomy, maintain confidentiality of organizational information, and ensure voluntary participation without coercion or undue influence. Research teams should provide clear information about consultation purposes, time commitments, and intended use of feedback while obtaining appropriate consent for participation in framework development processes.

The protocol acknowledges ongoing ethical considerations regarding artificial intelligence applications in research contexts, including algorithmic bias, transparency requirements, equitable access to technological capabilities, and potential impacts on vulnerable populations. Framework developers bear responsibility for incorporating bias mitigation strategies, promoting inclusive stakeholder representation, and addressing equity implications throughout development processes.

Research teams should recognize their professional obligations to promote responsible technology adoption that enhances rather than undermines implementation science values of contextual sensitivity, stakeholder engagement, and equitable practice. Framework recommendations must balance efficiency gains with preservation of human expertise and community input essential for ethical implementation science practice.

Organizations implementing resulting frameworks must establish appropriate governance structures ensuring continued ethical oversight of automated synthesis applications, including monitoring for bias amplification, maintaining transparency in technology use, and preserving meaningful stakeholder participation in evidence synthesis processes affecting their communities and interests.

Before start

Confirm access to comprehensive literature databases, including PubMed, Cochrane Library, Embase, and specialized implementation science journals with full-text retrieval capabilities for publications from 2020 through the present. Verify that institutional library privileges or commercial database subscriptions provide adequate coverage of both implementation science and artificial intelligence literature necessary for comprehensive evidence synthesis.

Assemble a qualified research team with demonstrated expertise in implementation science theory and practice, systematic review methodologies, and a basic understanding of artificial intelligence applications in healthcare contexts. Principal investigators should possess advanced knowledge of implementation frameworks and evidence synthesis approaches, while team members require competencies in literature review processes and analytical techniques supporting framework development.

Secure necessary analytical tools and software platforms, including qualitative data analysis applications, reference management systems, collaboration platforms for multi-reviewer coordination, and visualization software for framework presentation development. Establish document management systems supporting version control and collaborative editing throughout the extended framework development period.

Identify and engage potential stakeholder networks, including implementation science researchers, healthcare practitioners, technology specialists, organizational leaders, and community representatives who can provide expert review and feedback during validation phases. Confirm stakeholder availability and willingness to participate in structured consultation before initiating framework development activities.

Allocate adequate time: a principal investigator commitment of twenty to thirty hours weekly, plus research team availability for the intensive literature review and analysis phases. Set realistic timeline expectations, acknowledging that thorough framework development requires sustained effort over six to eight months, depending on organizational capacity and the complexity of stakeholder engagement.

Protocol Overview and Objectives

Study Title

Automating Evidence Synthesis in Implementation Science: A Framework for Navigating Benefits and Challenges

Primary Objective

To develop a comprehensive integration framework for incorporating automated evidence synthesis methods into implementation science practices while maintaining field-specific values of contextual sensitivity, stakeholder engagement, and equity.

Secondary Objectives

- Systematically analyze empirical evidence on automated synthesis performance capabilities and limitations.

- Assess automated synthesis methods against implementation science requirements across nine critical dimensions.

- Apply the EPIS framework to structure phase-specific implementation guidance.

- Develop human-AI collaboration models that enhance rather than replace human expertise.

- Create systematic equity safeguards for responsible technology integration.

Study Design

Multi-phase framework development study combining systematic evidence synthesis, theoretical application, and implementation science principles.

Literature Analysis and Evidence Synthesis

Search Strategy

Timeline: October 2024 - May 2025

Databases:

- PubMed/MEDLINE

- Scopus

- IEEE Xplore

- ACM Digital Library

- Preprint servers

Search Terms:

- ("artificial intelligence" OR "machine learning" OR "large language models") AND ("systematic review" OR "evidence synthesis" OR "meta-analysis")

- ("automated screening" OR "automated extraction") AND ("systematic review" OR "evidence synthesis")

- ("implementation science" OR "knowledge translation") AND ("artificial intelligence" OR "automation")
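The three search strings above share a common shape: OR-groups of quoted phrases joined by AND. A small sketch of how they might be assembled programmatically is shown below, which can help keep the strings consistent across databases. The helper names (`or_group`, `and_query`) are hypothetical, and each database has its own field tags and syntax rules that this sketch does not handle.

```python
def or_group(terms):
    """Quote each phrase and join the phrases with OR inside parentheses."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def and_query(*groups):
    """Join several OR-groups with AND."""
    return " AND ".join(or_group(group) for group in groups)


# Term groups copied from the search strategy above.
AI_TERMS = ["artificial intelligence", "machine learning", "large language models"]
SYNTHESIS_TERMS = ["systematic review", "evidence synthesis", "meta-analysis"]
AUTOMATION_TERMS = ["automated screening", "automated extraction"]
REVIEW_TERMS = ["systematic review", "evidence synthesis"]
IMPLEMENTATION_TERMS = ["implementation science", "knowledge translation"]
AI_OR_AUTOMATION = ["artificial intelligence", "automation"]

QUERIES = [
    and_query(AI_TERMS, SYNTHESIS_TERMS),
    and_query(AUTOMATION_TERMS, REVIEW_TERMS),
    and_query(IMPLEMENTATION_TERMS, AI_OR_AUTOMATION),
]

if __name__ == "__main__":
    for query in QUERIES:
        print(query)
```

Logging the generated strings alongside the search date supports the documentation standards described in the guidelines.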

Inclusion Criteria

- Peer-reviewed articles published 2020-2025

- Studies presenting quantitative performance metrics for AI-enhanced synthesis

- Research on automated screening, data extraction, living reviews, or synthesis accuracy

- English language publications

- Empirical studies with measurable outcomes

Exclusion Criteria

- Opinion pieces or editorials without empirical data

- Studies focusing solely on clinical prediction models

- Research without performance metrics or validation data

- Non-English publications

- Studies published before 2020
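The inclusion and exclusion criteria can be encoded as an explicit screening rule so that every exclusion carries a recorded reason. The sketch below is one possible encoding, not the author's tooling; the `CandidateRecord` fields are hypothetical and would in practice be filled in by reviewers during dual screening.

```python
from dataclasses import dataclass


@dataclass
class CandidateRecord:
    # All field names are illustrative assumptions.
    title: str
    year: int
    language: str
    peer_reviewed: bool
    empirical: bool
    has_performance_metrics: bool
    clinical_prediction_only: bool = False
    opinion_or_editorial: bool = False


def screen(record):
    """Return (eligible, reasons_for_exclusion) for one record."""
    reasons = []
    if not 2020 <= record.year <= 2025:
        reasons.append("published outside 2020-2025")
    if record.language.lower() != "english":
        reasons.append("non-English publication")
    if not record.peer_reviewed:
        reasons.append("not peer-reviewed")
    if record.opinion_or_editorial or not record.empirical:
        reasons.append("no empirical data")
    if record.clinical_prediction_only:
        reasons.append("focuses solely on clinical prediction models")
    if not record.has_performance_metrics:
        reasons.append("no performance metrics or validation data")
    return (not reasons, reasons)
```

Returning the full list of reasons, rather than stopping at the first failure, keeps the audit trail complete when a record fails several criteria at once.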

Data Extraction Process

Extract quantitative performance metrics

- Time reduction percentages

- Accuracy measures (sensitivity, specificity, precision, recall)

- Resource utilization comparisons

- Cost-effectiveness data

Document study characteristics

- Sample sizes

- Methodology used

- Validation approaches

- Comparison methods

Identify limitations and challenges

- Contextual sensitivity issues

- Reference accuracy problems

- Equity concerns

- Technical limitations
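For the quantitative metrics listed above, the following sketch shows the standard definitions of sensitivity, specificity, precision, and recall computed from a screening confusion matrix, along with a percentage time reduction. The function names are assumptions for this example; the formulas are the conventional ones, and recall is the same quantity as sensitivity.

```python
def safe_ratio(numerator, denominator):
    """Return numerator / denominator, or None when the ratio is undefined."""
    return numerator / denominator if denominator else None


def accuracy_measures(tp, fp, tn, fn):
    """Accuracy measures from counts of true/false positives and negatives.

    For screening, a 'positive' is a record the tool marks as relevant.
    """
    return {
        "sensitivity": safe_ratio(tp, tp + fn),  # relevant records found
        "specificity": safe_ratio(tn, tn + fp),  # irrelevant records rejected
        "precision": safe_ratio(tp, tp + fp),    # flagged records that are relevant
        "recall": safe_ratio(tp, tp + fn),       # identical to sensitivity
    }


def time_reduction_pct(manual_hours, automated_hours):
    """Percentage reduction in time relative to the manual baseline."""
    if manual_hours <= 0:
        raise ValueError("manual_hours must be positive")
    return 100.0 * (manual_hours - automated_hours) / manual_hours
```

Extracting the underlying counts and hours, where studies report them, allows these measures to be recomputed consistently instead of relying on each study's own reporting conventions.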

Implementation Science Requirements Assessment

Dimensional Analysis Framework

Define nine critical dimensions

- Time Efficiency

- Comprehensiveness

- Consistency

- Contextual Sensitivity

- Trustworthiness

- Stakeholder Engagement

- Human Expertise Preservation

- Equity Considerations

- Living Evidence Capabilities

Assess requirements for each dimension

- Document implementation science field requirements

- Identify minimum acceptable performance thresholds

- Define quality indicators for each dimension

Comparative Performance Analysis

Rate automated synthesis performance (1-5 scale)

- Very Poor (1)

- Poor (2)

- Adequate (3)

- Good (4)

- Excellent (5)

Rate implementation science requirements (1-5 scale)

- Same scale as above

- Based on field standards and expert consensus

Identify gaps and strengths

- Calculate performance gaps (requirement score - current performance)

- Prioritize areas needing attention

- Document areas of strength for leverage
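The gap calculation described above (requirement score minus current performance, both on the 1-5 scale) can be sketched as follows. The dimension names and scale labels come from this protocol; the function names are hypothetical, and any ratings passed in are inputs supplied by the research team, not findings reported here.

```python
DIMENSIONS = [
    "Time Efficiency", "Comprehensiveness", "Consistency",
    "Contextual Sensitivity", "Trustworthiness", "Stakeholder Engagement",
    "Human Expertise Preservation", "Equity Considerations",
    "Living Evidence Capabilities",
]
SCALE = {1: "Very Poor", 2: "Poor", 3: "Adequate", 4: "Good", 5: "Excellent"}


def performance_gaps(requirements, performance):
    """Gap per dimension: requirement score minus current performance."""
    gaps = {}
    for dim in DIMENSIONS:
        req, perf = requirements[dim], performance[dim]
        if req not in SCALE or perf not in SCALE:
            raise ValueError(f"{dim}: ratings must be integers from 1 to 5")
        gaps[dim] = req - perf
    return gaps


def prioritize(gaps):
    """Dimensions where performance falls short, largest gap first."""
    shortfalls = [dim for dim, gap in gaps.items() if gap > 0]
    return sorted(shortfalls, key=lambda dim: gaps[dim], reverse=True)


def strengths(gaps):
    """Dimensions where performance meets or exceeds the requirement."""
    return [dim for dim, gap in gaps.items() if gap <= 0]
```

A positive gap marks an area needing attention, while a zero or negative gap marks a strength that can be leveraged. Ties in `prioritize` keep the order in which the dimensions are listed.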

EPIS Framework Application

Phase Mapping Process

Exploration Phase Analysis

- Map automated synthesis opportunities in needs assessment

- Identify stakeholder engagement requirements

- Define governance planning needs

- Assess organizational readiness factors

Preparation Phase Planning

- Outline strategy development processes

- Define infrastructure requirements

- Establish quality assurance protocols

- Plan equity safeguard implementation

Implementation Phase Guidelines

- Design continuous monitoring systems

- Create adaptive refinement processes

- Establish human oversight protocols

- Define performance validation methods

Sustainment Phase Framework

- Plan capacity building initiatives

- Design long-term governance structures

- Create system integration protocols

- Establish continuous improvement processes
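One way to make the phase mapping operational is to hold it as a simple data structure from which phase-specific checklists can be generated and tracked. The sketch below assumes this representation; the item text is copied from the lists above, while the structure and the function names are illustrative only.

```python
EPIS_CHECKLIST = {
    "Exploration": [
        "Map automated synthesis opportunities in needs assessment",
        "Identify stakeholder engagement requirements",
        "Define governance planning needs",
        "Assess organizational readiness factors",
    ],
    "Preparation": [
        "Outline strategy development processes",
        "Define infrastructure requirements",
        "Establish quality assurance protocols",
        "Plan equity safeguard implementation",
    ],
    "Implementation": [
        "Design continuous monitoring systems",
        "Create adaptive refinement processes",
        "Establish human oversight protocols",
        "Define performance validation methods",
    ],
    "Sustainment": [
        "Plan capacity building initiatives",
        "Design long-term governance structures",
        "Create system integration protocols",
        "Establish continuous improvement processes",
    ],
}


def outstanding_items(completed):
    """Items not yet done; `completed` maps phase name to a set of finished items."""
    return {
        phase: [item for item in items if item not in completed.get(phase, set())]
        for phase, items in EPIS_CHECKLIST.items()
    }


def phase_completion(completed):
    """Fraction of checklist items finished in each phase."""
    return {
        phase: sum(item in completed.get(phase, set()) for item in items) / len(items)
        for phase, items in EPIS_CHECKLIST.items()
    }
```

Keeping the checklist in one place means the same source can drive both progress reporting and the phase-specific guidance documents produced later in the protocol.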

Integration Strategy Development

Human-AI Collaboration Model Design

- Define task allocation principles

- Create decision-making workflows

- Establish handoff protocols

- Design feedback mechanisms

Equity Safeguard Integration

- Develop bias monitoring protocols

- Create inclusive engagement strategies

- Design representative participation frameworks

- Establish equity assessment metrics

Multi-Dimensional Comparative Assessment

Benefits and Concerns Analysis

Document empirical benefits

- Time efficiency gains (50-95% reduction documented)

- Comprehensiveness improvements

- Consistency enhancements

- Living evidence capabilities

Identify documented concerns

- Contextual sensitivity limitations

- Trustworthiness issues (13.8% reference accuracy)

- Equity and bias risks

- Stakeholder engagement challenges

Evidence Quality Assessment

Rate evidence strength

- Strong empirical support

- Moderate empirical support

- Limited empirical support

- Theoretical concern only

Assess implementation relevance

- Directly applicable to implementation science

- Generally applicable with adaptation

- Limited applicability

- Not applicable

Framework Validation Design

Validation Metrics Development

Individual recommendation metrics

- Time reduction targets

- Accuracy thresholds

- Stakeholder satisfaction measures

- Equity indicators

Phase-level outcomes

- Needs assessment quality

- Strategy development effectiveness

- Implementation monitoring capabilities

- Sustainment success rates

Framework-level system outcomes

- Evidence-to-practice gap reduction

- Implementation equity improvements

- Stakeholder capacity development

- Long-term sustainability measures

Pilot Implementation Planning

Design pilot study framework

- Define pilot organization criteria

- Establish baseline measurements

- Create intervention protocols

- Plan outcome assessments

Comparative effectiveness study design

- Traditional vs. automated synthesis comparison

- Randomized or quasi-experimental design

- Primary and secondary outcome measures

- Statistical analysis plan

Framework Documentation and Dissemination

Framework Documentation

Create visual framework representations

- EPIS-guided integration diagram

- Human-AI collaboration workflow

- Multi-dimensional performance radar chart

- Technology infrastructure architecture

Develop implementation guidance documents

- Phase-specific checklists

- Decision-making tools

- Quality assurance templates

- Stakeholder engagement guides

Stakeholder Engagement Strategy

Identify key stakeholder groups

- Implementation scientists and researchers

- Healthcare organizations and practitioners

- Technology developers and vendors

- Policy makers and funders

- Community representatives

Design engagement activities

- Expert review panels

- Stakeholder feedback sessions

- Professional conference presentations

- Policy briefings

Quality Assurance and Risk Management

Quality Control Measures

Establish review processes

- Expert panel review of framework components

- Methodological quality assessments

- Internal consistency checks

- External validation planning

Risk mitigation strategies

- Bias monitoring protocols

- Equity safeguard implementation

- Acknowledgment of technical limitations

- Addressing stakeholder concerns

Ethical Considerations

Address ethical implications

- Informed consent for technology use

- Data privacy and security

- Algorithmic transparency

- Equity and justice considerations

Expected Outcomes and Impact

Focus on Primary Deliverables

- Comprehensive integration framework

- Phase-specific implementation guidance

- Human-AI collaboration model

- Equity safeguard protocols

Highlight Anticipated Impact

- Accelerated evidence-to-practice translation

- Improved synthesis efficiency (50-95% time reduction)

- Enhanced stakeholder engagement processes

- Strengthened equity considerations in technology adoption

Plan for Future Research Directions

- Empirical framework validation studies

- Comparative effectiveness research

- Long-term impact assessments


Protocol references

Moullin, J. C., Dickson, K. S., Stadnick, N. A., Rabin, B., & Aarons, G. A. (2019). Systematic review of the exploration, preparation, implementation, sustainment (EPIS) framework. Implementation Science, 14(1), 1-16. DOI: 10.1186/s13012-019-0876-4

Damschroder, L. J., Reardon, C. M., Widerquist, M. A. O., & Lowery, J. (2022). The updated Consolidated Framework for Implementation Research based on user feedback. Implementation Science, 17, 75. DOI: 10.1186/s13012-022-01245-0

Powell, B. J., Waltz, T. J., Chinman, M. J., Damschroder, L. J., Smith, J. L., Matthieu, M. M., Proctor, E. K., & Kirchner, J. E. (2015). A refined compilation of implementation strategies: Results from the Expert Recommendations for Implementing Change (ERIC) project. Implementation Science, 10, 21. DOI: 10.1186/s13012-015-0209-1

Page, M.J., McKenzie, J.E., Bossuyt, P.M., et al. (2021). The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ, 372, n71. DOI: 10.1136/bmj.n71

Page, M.J., Moher, D., Bossuyt, P.M., et al. (2021). PRISMA 2020 explanation and elaboration: updated guidance and exemplars for reporting systematic reviews. BMJ, 372, n160. DOI: 10.1136/bmj.n160

Shea, B.J., Reeves, B.C., Wells, G., et al. (2017). AMSTAR 2: a critical appraisal tool for systematic reviews that include randomised or non-randomised studies of healthcare interventions, or both. BMJ, 358, j4008. DOI: 10.1136/bmj.j4008

Akl, E.A., El Khoury, R., Khamis, A.M., et al. (2023). The life and death of living systematic reviews: a methodological survey. Journal of Clinical Epidemiology, 156, 11-21. DOI: 10.1016/j.jclinepi.2023.02.005

Garritty, C., Hamel, C., Trivella, M., et al. (2024). Updated recommendations for the Cochrane rapid review methods guidance for rapid reviews of effectiveness. BMJ, 384. DOI: 10.1136/bmj-2023-076335

van de Schoot, R., et al. (2021). An open source machine learning framework for efficient and transparent systematic reviews. Nature Machine Intelligence. DOI: 10.1038/s42256-020-00287-7

Ferdinands, G., et al. (2023). Performance of active learning models for screening prioritization in systematic reviews: a simulation study. Systematic Reviews

Ames, H.M.R., et al. (2022). Accuracy and efficiency of machine learning-assisted risk-of-bias assessments in 'real-world' systematic reviews. BMC Medical Research Methodology

Qureshi, R., et al. (2023). Are ChatGPT and large language models 'the answer' to bringing us closer to systematic review automation? Systematic Reviews. DOI: 10.1186/s13643-023-02243-z

Khraisha, Q., et al. (2024). Can large language models replace humans in systematic reviews? Evaluating GPT-4's efficacy in screening and extracting data. Research Synthesis Methods

Blaizot, A., et al. (2022). Using artificial intelligence methods for systematic review in health sciences: A systematic review. Research Synthesis Methods. DOI: 10.1002/jrsm.1553

Atkins, L., Francis, J., Islam, R., O'Connor, D., Patey, A., Ivers, N., ... & Michie, S. (2017). A guide to using the Theoretical Domains Framework of behaviour change to investigate implementation problems. Implementation Science, 12, 77. DOI: 10.1186/s13012-017-0757-1

Curry, L. A., Krumholz, H. M., O'Cathain, A., Plano Clark, V. L., Cherlin, E., & Bradley, E. H. (2013). Mixed methods in biomedical and health services research. Circulation: Cardiovascular Quality and Outcomes, 6(1), 119-123. DOI: 10.1161/CIRCOUTCOMES.112.967885

Humphrey-Murto, S., Varpio, L., Wood, T. J., Gonsalves, C., Ufholz, L. A., Mascioli, K., ... & Foth, T. (2017). The use of the Delphi and other consensus group methods in medical education research: A review. Academic Medicine, 92(10), 1491-1498. DOI: 10.1097/ACM.0000000000001812

Rogers, L., De Brún, A., & McAuliffe, E. (2020). Development of an integrative coding framework for evaluating context within implementation science. BMC Medical Research Methodology, 20, 158. DOI: 10.1186/s12874-020-01118-3

Bienefeld, N., Keller, E., & Grote, G. (2024). Human-AI Teaming in Critical Care: A Comparative Analysis of Data Scientists' and Clinicians' Perspectives on AI Augmentation and Automation. Journal of Medical Internet Research, 26, e50130. DOI: 10.2196/50130

Farzaneh, N., et al. (2023). Collaborative strategies for deploying AI-based physician decision support systems: challenges and deployment approaches. npj Digital Medicine, 6, Article 137. DOI: 10.1038/s41746-023-00881-z

Asan, O., et al. (2020). Artificial Intelligence and Human Trust in Healthcare: Focus on Clinicians. Journal of Medical Internet Research, 22(6), e15154. DOI: 10.2196/15154

Labkoff, S., et al. (2024). Toward a responsible future: recommendations for AI-enabled clinical decision support. Journal of the American Medical Informatics Association, 31(11), 2730-2739. DOI: 10.1093/jamia/ocae209

Hassan, M., Kushniruk, A., & Borycki, E. (2024). Barriers to and Facilitators of Artificial Intelligence Adoption in Health Care: Scoping Review. JMIR Human Factors, 11, e48633. DOI: 10.2196/48633

Fragiadakis, G., et al. (2024). Evaluating Human-AI Collaboration: A Review and Methodological Framework. arXiv preprint arXiv:2407.19098

Niederberger, M., & Köberich, S. (2021). Coming to consensus: the Delphi technique for consensus-building in healthcare research. BMC Medical Research Methodology, 21, 112

Alonso-Coello, P., et al. (2020). A conceptual framework for an integrated approach to guideline development and quality assurance. BMC Health Services Research, 20, 987. DOI: 10.1186/s12913-020-05847-1

Smith, B., et al. (2024). Development and validation of a Physical Activity Messaging Framework: An international Delphi study. International Journal of Behavioral Nutrition and Physical Activity, 21, 45

Skivington, K., et al. (2021). A new framework for developing and evaluating complex interventions: Update of Medical Research Council guidance. BMJ, 374, n2061. DOI: 10.1136/bmj.n2061

Potthoff, S., Finch, T., Bührmann, L., et al. (2023). Towards an Implementation‐STakeholder Engagement Model (I‐STEM) for improving health and social care services. Health Expectations, 26(5), 1997-2012. DOI: 10.1111/hex.13808

Triplett, N.S., Woodard, G.S., Johnson, C., et al. (2022). Stakeholder engagement to inform evidence-based treatment implementation for children's mental health: a scoping review. Implementation Science Communications, 3, 82. DOI: 10.1186/s43058-022-00327-w

Agyei‐Manu, E., Atkins, N., Lee, B., et al. (2023). The benefits, challenges, and best practice for patient and public involvement in evidence synthesis: a systematic review and thematic synthesis. Health Expectations, 26(4), 1436-1452. DOI: 10.1111/hex.13787

Grindell, C., Coates, E., Croot, L., & O'Cathain, A. (2022). The use of co-production, co-design and co-creation to mobilise knowledge in the management of health conditions: a systematic review. BMC Health Services Research, 22, 877. DOI: 10.1186/s12913-022-08079-y

Byiringiro, S., Aggarwal, R., Mupenda, B., et al. (2022). Transitioning a community research advisory council to virtual meetings: lessons learned during the COVID-19 pandemic and beyond. Journal of Clinical and Translational Science, 6(1), e121. DOI: 10.1017/cts.2022.457

Holcomb, J., Ferguson, G.M., Sun, J., et al. (2022). Stakeholder Engagement in Adoption, Implementation, and Sustainment of an Evidence-Based Intervention to Increase Mammography Adherence Among Low-Income Women. Journal of Cancer Education, 37, 1486-1495. DOI: 10.1007/s13187-021-01988-2

Acknowledgements

This protocol development benefited from insights gained by examining existing implementation science frameworks and technology adoption methodologies. The approach builds upon established systematic review standards and implementation science theoretical foundations while addressing the unique challenges of integrating emerging technologies within evidence-based practice contexts.