Aug 27, 2025

Bioinformatic workflow for Nanopore metabarcoding analysis

- Juan M Zurita-Artaloitia1,

- Ilse Patricia Salinas Peralta2,

- Alejandra Escobar-Zepeda3,

- Jérôme rleyen2,

- Alejandro Sanchez2,

- Liliana Pardo-López1

- 1Instituto de Biotecnología - UNAM;

- 2UUSMB/UNAM;

- 3EBI

Protocol Citation: Juan M Zurita-Artaloitia, Ilse Patricia Salinas Peralta, Alejandra Escobar-Zepeda, Jérôme rleyen, Alejandro Sanchez, Liliana Pardo-López 2025. Bioinformatic workflow for Nanopore metabarcoding analysis. protocols.io https://dx.doi.org/10.17504/protocols.io.eq2lywmxqvx9/v1

License: This is an open access protocol distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited

Protocol status: Working

We use this protocol and it's working

Created: October 17, 2024

Last Modified: August 27, 2025

Protocol Integer ID: 110247

Keywords: nanopore, barcoding, 16s, diversity, bioinformatic workflow for nanopore, oxford nanopore, nanopore, metabarcoding analysis, barcoding experiment, bioinformatic workflow, alpha diversity analysis between sample, alpha diversity analysis, sequencing run, rarefaction curve

Funders Acknowledgements:

CONACYT Scholarship

Grant ID: 1270074

LANCO-CONAHCyT

Grant ID: APOYOSLNC-2023-80

DGAPA-PAPIIT

Grant ID: IG200223

Disclaimer

None

Abstract

This protocol is aimed to help scientists process an Oxford Nanopore sequencing run from a 16S meta-barcoding experiment to produce a rarefaction curve, an alpha diversity analysis between samples and a stacked bar plot of the most represented taxons.

Guidelines

If its your first time using a Linux environment, you should check a basic Linux tutorial first or ask for help to a colleague.

Materials

Software used in this specific workflow:

- A Linux/Mac terminal with Ubuntu 22.04.5

(In case you are using Windows: a Linux virtual machine will work (But you may need to manually install some dependencies that are installed by default in Linux/Mac)).

- R v. 4.4.2

- R Studio v. 2024.04.2

- Perl v 5.32.1

- Porechop v. 0.2.4

- FastQC v. 0.11.9

- Chopper v. 0.8.0

- Parallel-meta 2 v. 2.4.1

- Metaxa2 Database v. 2.2.3

The R libraries used in this analysis are:

- RColorBrewer

- vegan

- tidyverse

- tibble

- cowplot

- ggh4x

- factoextra

- reshape2

- dplyr

- microbiome

- ggplot2

- ranacapa

- microViz

- microbiomeutilities

Troubleshooting

Safety warnings

Running Parallel-meta in a low-specs machine may cause trouble to the hardware.

Considerations

Software used in this specific workflow:

- A Linux/Mac terminal with Ubuntu 22.04.5

(In case you are using Windows: a Linux virtual machine will work (But you may need to manually install some dependencies that are installed by default in Linux/Mac)).

- R v. 4.4.2

- R Studio v. 2024.04.2

- Perl v 5.32.1

- Porechop v. 0.2.4

- FastQC v. 0.11.9

- Chopper v. 0.8.0

- Parallel-meta 2 v. 2.4.1

- Metaxa2 Database v. 2.2.3

- Apptainer v. 1.4.0

- Taxonkit v.0.18.0

The R libraries used in this analysis are:

- RColorBrewer

- vegan

- tidyverse

- tibble

- cowplot

- ggh4x

- factoextra

- reshape2

- dplyr

- microbiome

- ggplot2

- ranacapa

- microViz

- microbiomeutilities

Software

R programming language

NAME

The R Foundation

DEVELOPER

REPOSITORY

SOURCE LINK

To install any library you need to use the following command in the R terminal:

Command

new command name

is very computational demanding.

If you dont't run Parallel-meta2 in a high-end computer or in a cluster, consider that the processing may take a long time.

When the commands in this tutorial have a name (The ones that end with ".sh" or ".pl"), you need to download them and run the command in your terminal.

If the command doesn't have a name, then you can simply copy and paste it directly at the terminal.

A sample data set to follow along this protocol can be obtained in the following repository:

Pre-processing data

IMPORTANT: If your data isn't coming from a Nanopore sequencer, and you already have the fastqs, skip to "Primer Cleaning".

After you extract the fastQ files from the MinION device, locate the files in the drive in your machine.

Note

The MinION device gives you the option to automatically "base call" the reads, meaning it does the signals interpretation as nucleotides. In case you didn't select that option or want to manually do the base calling, then you can do it using a software like Dorado for the task.

Available online at: https://github.com/nanoporetech/dorado

Copy only the desired barcodes files into your local PC. For example: the barcodes 12, 24, 36 and 48.

Command

new command name

Verify that the directories aren't empty and have the right amount of files (Relative to the the sequencing run time).

Note

The Nanopore sequencing will usually generate a lot of barcodes because of sequencing errors. But this can be easily identified by entering the non-desired directories and checking the number of files and the number of lines in each file.

For example:

The line count for the files in the barcode02 goes from 1 to 9.

The line count for the barcode12 goes from 2,835 to 2,659,653

Inside each file you can check this lengths using the command:

wc -l *

Optional: Verify that the files are actual Nanopore reads.

Command

new command name

The result should look like this:

@90c28ef6-45c8-4631-8851-2a67f74df137 runid=de32243e9f191a1a220f17ceaf869f23af588111 read=30 ch=324 start_time=2024-06-14T08:27:37.190699-06:00 flow_cell_id=FAW16131 protocol_group_id=Sigsbee24 sample_id=1 barcode=barcode12 barcode_alias=barcode12 parent_read_id=90c28ef6-45c8-4631-8851-2a67f74df137 [email protected]

GCCGTCCATGGCCGCCGTTCCAGTTACGTATTGCCAATTGCTGCAGGTAGAAAACAGAATCGGATTAACCTACTTGCCTGTCGCTCTATCTTCCGGTTACCTTGTTACGACTTCCACCCCAGTCATGAACCACACCGTGAAATCGTCCCTCCCGAAGGTTAGACTAACTACTTCTGGTGCAATCCCGCACTCCCATGGTGTGACGGGCGGTGTGTACAAGGCCCGGGAACGTATTCACCGCGACATTCTGATTCGCGATTTTAAGCGATTCCGACTTCGGAGTCGAGTTGCAGACTCCGATCCGGACTACGACGCGTTTTAAAGGGATTGGCTCACTCTCGCGAGTTGGCAGCCCCTCTGTACGCGCCATTGTAGCACGTGTGTAGCCCCTGGCCGTAGGGTGACCTGACGTCATCCCCACCTTCCCCTCGGTTTGTCACCGGCAGTCTCCCTGGAGTTCTCAGCATTACCTGCTAGCAACCAGGGATAGGGGTTATACGACACGAGCTGACGACGGCCATGCAGCACCTATCCCTGGTTGCTGGCAGGCTCGGCGTTGCACCTAAATGACCTGCTCTTGGCTCCGCCTCTTCCGTGGCTGGCTGTCGCGAATCATCACCCATTCCTTCAGATGGTACTGAAATGACCAGTCGGAACATTTCCCGGTGTGGTGTCATGTTCATGACCGAATTCTGCCTAACTACTACAGCTTTCAACTTC

Concatenate all the files.

Note

Make sure you are inside the correct directory and name the concatenated output accordingly.

Command

new command name

Once you have them all concatenated with each file being named after the barcode, store them into the same directory.

Primer cleaning

Proceed to removing the Nanopore adapter sequences from our files.

Install the following software.

Software

Porechop

NAME

CentOS 7.4.1708

OS

Ryan Wick

DEVELOPER

REPOSITORY

SOURCE LINK

Run this loop. Which iterates over all the fastq files and removes the adapters, generating for each an output with the "pc" name, meaning that they have been through Porechop.

Command

Run Porechop

Quality check

To assure the quality of the reads, a QC step is necessary.

Install the following software:

Software

FastQC

NAME

Simon Andrews

DEVELOPER

Run the following command.

Command

new command name

Now check your quality results and consider if the length of your results is adequate, the quality and length of the reads.

Note

If your reads are not bigger than 1,000 bp those may not be complete 16S reads.

If your reads are bigger than 2,000 bp, those may be 16S chimeras.

Sequence filtering

Filter your sequence length with Chopper.

Software

Chopper

NAME

Ubuntu 22.04

OS

Wouter De Coster

DEVELOPER

REPOSITORY

SOURCE LINK

Here, we are selecting the sequences that have a have a minimum length of 1000 and a maximum length of 2000. These lengths were selected because they are within the common lengths of 16S genes.

Command

new command name

Note

The filters can be variable. Depending on the quality and lengths you are looking for. This is just an example of how to filter with these parameters.

If desired, a quality filter can be done with the option:

--quality 6

(In this example, we use reads with a Phred score above 6 )

We did not consider quality in this protocol because Parallel-Meta 2 has an internal filter that removes sequences that do not resemble 16S genes.

Optional: After this step run FASTQC again, to ensure that chopper worked.

Running parallel-meta

Now run the taxonomical analysis with Parallel-Meta 2, and the database Metaxa2.

Install the following software:

Software

Parallel-Meta

NAME

CentOS 7.4.1708

OS

Xiaoquan Su

DEVELOPER

As this software is no longer available, an image was uploaded here:

The image can be run with Apptainer, which can be downloaded here:

Then, this is how it can run:

Command

new command name

Download the DB Metaxa2. Assing as "X" in the database index.

Unzip your data before using parallel-meta.

Command

new command name

Run the following command.

Note

This process is very computational demanding. Make sure to run it in a powerful enough computer or ask assistance from the computer expert in your research center.

A cluster with a scheduling system is highly recommended.

Command

new command name

The previous step generates a directory for each barcode/sample.

Data parsing

For the next step, we need to make a loop that produces a count matrix for each classification file.

Now we edit the name of each file with the sample name, making sure each has a the termination "classification.txt"

First, make sure to be in the directory that contains all the directories created in the .

After, create a file named classification_list in which all the paths to the classification files are saved.

Command

new command name

Now, we use this command to make the loop and prepare the count matrix for each classification file.

Command

count.matrix.sh

Now we do the same that in step 23, making now a path list file but for each matrix.

Command

new command name

Command

matrix.integrator.perl

Run with the following

Command

new command name

Next, we filter the whole table to delete the hits for eukarya, mitochondria, chloroplast and other lines that we dont need.

Note

As we are only focused in 16S diversity, we are removing the chloroplast, mitochondria and eukarya sequences.

Command

new command name

Command

taxa_levels.pl

And its run adding "counts" at the end.

Command

new command name

We format the data for phyloseq.

Command

new command name

As metaxa2 database has some outdated taxas, we will use the software Taxonkit in order to update them.

Command

new command name

After this step, its necessary to check for the taxons that weren't detected by taxonkit.

Command

new command name

Manually look up for the species name in the Taxonomy browser from NCBI (https://www.ncbi.nlm.nih.gov/Taxonomy/Browser/wwwtax.cgi) and change the species name in the 8th column to the current one in the taxonomy_table.txt.

In the example above, the species Pseudomonas alcaligenes needs to be changed to: Aquipseudomonas alcaligenes

After all the species names are corrected, run again and to make sure that all the taxons were successfully corrected by Taxonkit.

Then, run again this command:

Command

new command name

Then, transform the previous output into the taxonomy table file.

Command

new command name

Now we create a file named metadata.txt with the following structure example (each column must be separated by a tab):

SampleID Origin

ID1 Water

ID2 Sediment

ID3 Soil

ID4 Saliva

A metadata file can be created with the following command (note: you can add as many meta information as necessary, as shown in the example above):

Command

new command name

...

Diversity analysis

As a result, we should have 3 files:

otu_table.txt

taxonomytable.txt

metadata.txt

Now paste this command in the R Studio file.

Be sure to add the working directory you are in before running it in this part: setwd("/PATH").

The code should be copied into an Rstudio session, so each line is ran individually. This is because some lines need to be modified.

For each abundance, search for the following 4 lines:

Command

new command name

For each, run the first line to define the cutoff of your relative abundance.

The second line will tell how many taxas are considered after your cut off. Try to use a number between 40-60 to avoid having too many or to little taxas.

You can register this number in the third line.

And in the fourth line register this number in a variable to be used in the plot.

Also, in the line:

Command

new command name

Replace "Origin", "Depth" and "Platform" with your metadata.

Command

new command name

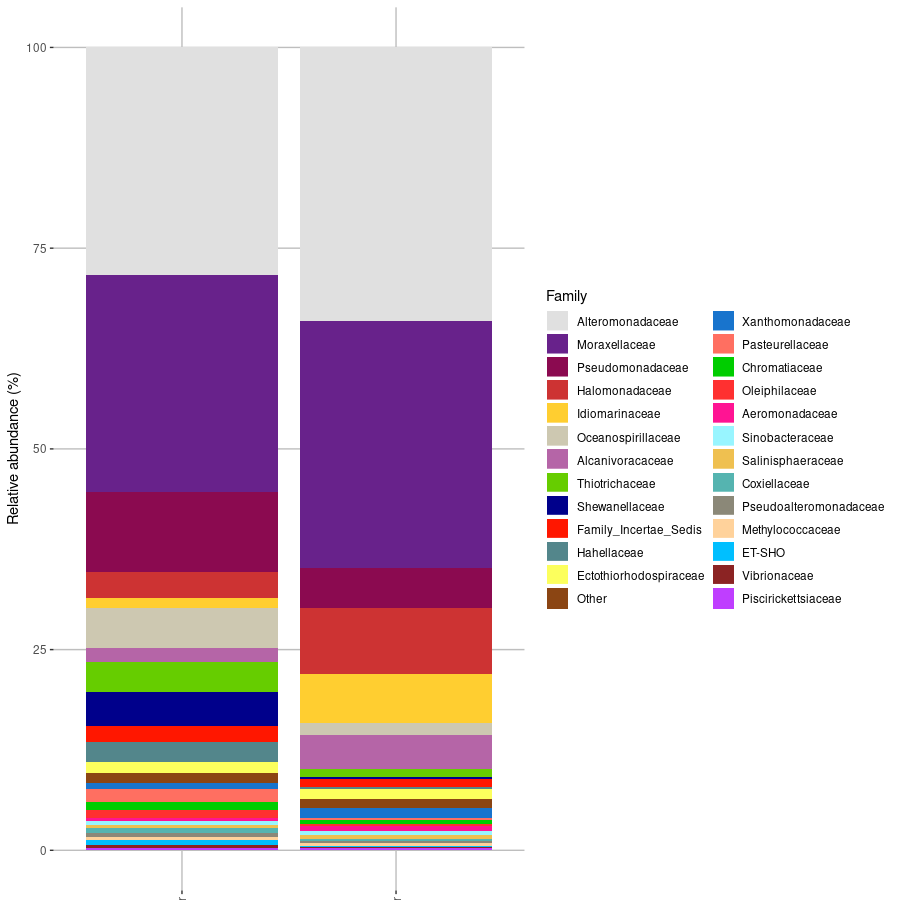

This is the results for the family levels taxa, but stacked bar plots can be made in different taxa levels.

Expected result